AI Ethics Guide

The complete framework for responsible, transparent and safe artificial intelligence.

Introduction

AI ethics defines how artificial intelligence can be safe, fair, transparent and aligned with human values. This guide serves as the central hub for all topics related to AI ethics, including regulation, bias, safety, surveillance, digital rights and accountability.

Related Pillars: Digital Safety, Neurotechnology, AI Trends

What AI Ethics Means in 2026

AI ethics in 2026 is no longer theoretical. It shapes real‑world systems, global regulation, industry standards and the design of AI models that influence billions of people.

Core Principles of AI Ethics

Transparency

Users must understand how AI systems make decisions and what data they rely on.

Fairness

AI must avoid discrimination based on race, gender, age, language or socioeconomic status.

Accountability

Organizations must be responsible for the outcomes of their AI systems.

Safety

AI must be robust, predictable and resistant to misuse or adversarial attacks.

Privacy

Data protection is essential, especially for sensitive biometric and behavioral data.

Algorithmic Bias, Fairness & Transparency

Bias emerges from imbalanced datasets, flawed metrics, opaque models and insufficient oversight. Ethical AI requires fairness techniques such as debiasing, balanced datasets, explainable AI and fairness metrics.

AI Safety & Risk Mitigation

AI systems introduce risks such as hallucinations, autonomous agent behavior, misinformation and security vulnerabilities. Safety protocols include red teaming, evaluations, alignment testing and layered safeguards.

Surveillance, Privacy & Digital Rights

AI‑driven surveillance can enable facial recognition, predictive policing and emotional profiling. Digital rights include privacy, the right to explanation, the right to contest automated decisions and cognitive autonomy.

Regulation & Global Standards

EU AI Act

The first comprehensive AI law defining risk categories, obligations and enforcement. The EU AI Act is the world's first comprehensive AI regulation, introducing a risk‑based framework, strict transparency rules, and enforcement mechanisms that apply across all 27 EU member states. It becomes fully applicable on August 2, 2026, with additional obligations rolling out in 2027.

1) Risk Categories (Annex I–III)

The Act classifies AI systems into four levels:

- Prohibited AI — social scoring, manipulative systems, biometric categorization based on sensitive traits.

- High‑Risk AI — HR systems, credit scoring, biometric identification, medical AI, education AI, critical infrastructure.

- Limited‑Risk AI — chatbots, generative AI, recommendation systems (require transparency).

- Minimal‑Risk AI — video games, spam filters (no obligations).

2) Transparency Requirements (Article 50)

All AI systems that interact with humans must:

- disclose that the user is interacting with AI

- label AI‑generated content (including deepfakes)

- apply watermarking or metadata tagging

- provide explanations when required by law

Effective date: August 2, 2026.

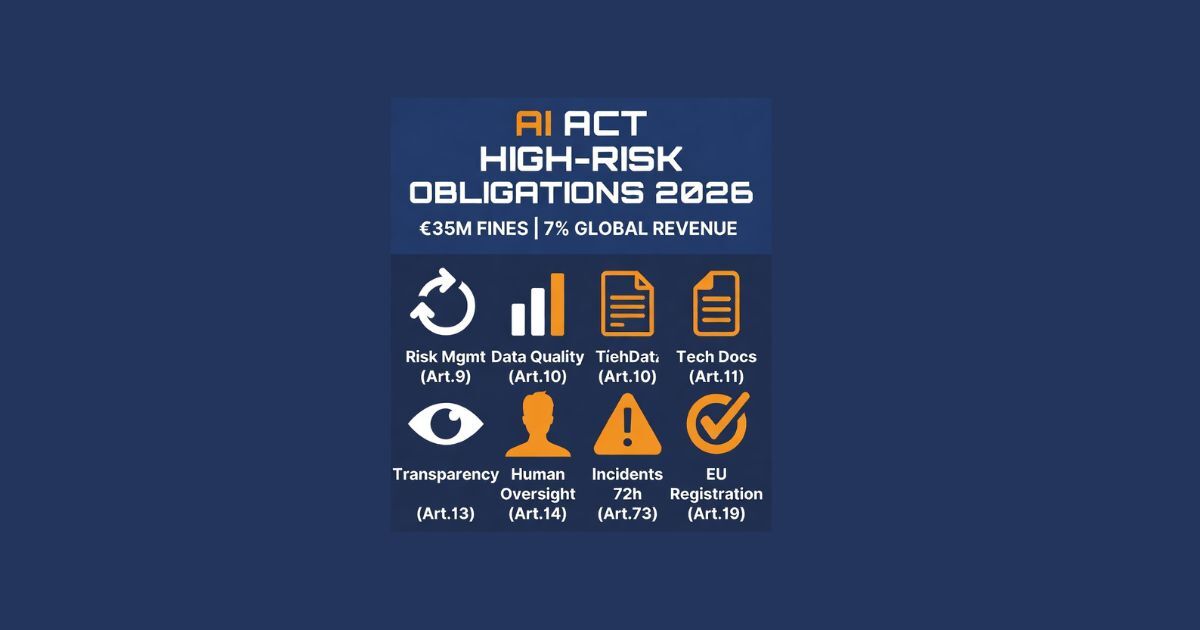

3) High‑Risk AI Obligations (Articles 8–29)

High‑risk systems must comply with:

- risk management

- high‑quality datasets

- technical documentation

- logging & monitoring

- human oversight

- cybersecurity

- post‑market monitoring

- conformity assessments

Full enforcement: August 2, 2027.

4) Enforcement & Penalties

- €35M or 7% global turnover — prohibited AI

- €15M or 3% global turnover — high‑risk violations

- €7.5M or 1% — transparency failures

Enforcement bodies:

- EU AI Office

- National supervisory authorities

- Notified bodies (for high‑risk systems)

5) Timeline (Full Breakdown)

- August 2, 2026 — General application + transparency rules

- August 2, 2027 — High‑risk AI obligations

- 2025–2027 — Sandboxes, standards, conformity assessments

Full timeline: EU AI Act — What Changes on August 2, 2026

UNESCO AI Ethics Framework

A global standard for responsible AI development and deployment.

OECD Principles

Guidelines for trustworthy AI across international borders.

NIST AI Risk Management Framework

A technical standard for evaluating and mitigating AI risks.

Ethics in Real‑World AI Systems

Explore how ethical principles shape real AI systems, regulation, scientific progress and global standards.

Blog Articles

All latest articles in one place:

Stay Updated

New articles are published regularly. Bookmark this page or subscribe for updates.

-

EU AI Act — What Changes on August 2, 2026

A breakdown of the key ethical and regulatory shifts coming with the EU’s updated AI rules. -

The Ethics of Artificial Intelligence

An overview of the core ethical questions shaping how AI should be designed, deployed and governed. -

Ethics and Technology: Comparing OpenAI, DeepMind & UNESCO

A comparison of AI institutions and how their ethical frameworks differ in principles and practice. -

UNESCO Sets Ethical Standards for Neurotechnology

A look at UNESCO’s global guidelines for responsible neurotechnology and human‑rights‑based innovation. -

The Ethics of AI Surveillance

A detailed examination of how the EU’s AI Act sets strict, rights‑based limits on biometric and algorithmic surveillance, contrasted with the U.S.’s reactive, crisis‑driven approach that still lacks clear boundaries. -

AI Agents, Panic and Real Risks

A grounded perspective on the real ethical risks of autonomous AI agents versus the hype and fear.